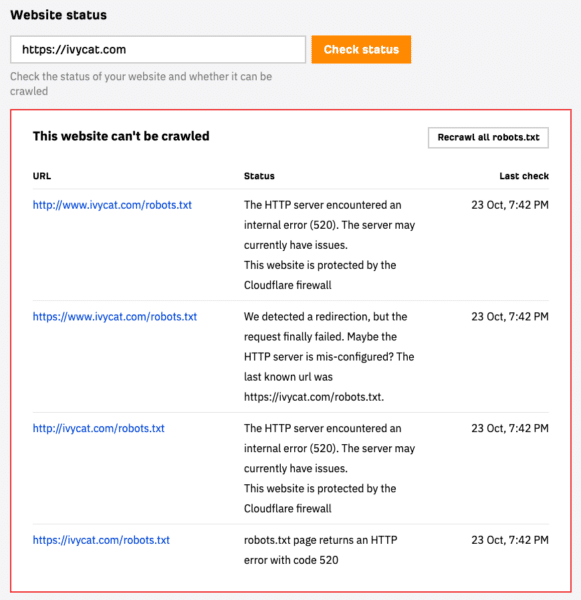

We relocated our site to a dedicated server at WP Engine and found that the AhrefsSiteAudit crawler could not crawl our website. Instead, Ahrefs reported a 502 error for our robots.txt file.

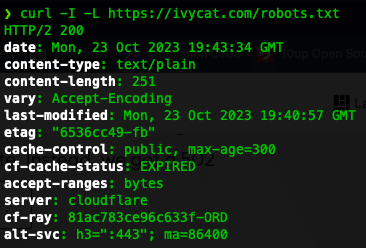

Checking robots.txt access using curl

Using curl on the command line, we could see that the URL was available and returned a 200 OK status, which is what we’d expect.

The curl command to use is:

curl -I -L https://domain.com/robots.txt

Replace https://domain.com with your own site address. The -I option fetches only the response headers, and -L follows any redirects.

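If you see a response like HTTP/2 200, you can fetch the file without problems directly from your machine. That rules out a missing or broken file, but not a CDN or firewall treating crawler traffic differently.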
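Because -L makes curl print a header block for every hop, a redirect (say, http to https or a www redirect) means the first status line you see isn't the one that matters. Here's a small bash sketch that prints only the final status code. The `final_status` function name is our own invention, not part of curl.

```bash
#!/usr/bin/env bash
# final_status (hypothetical helper): read `curl -I -L` header output on stdin
# and print the status code from the last HTTP status line, i.e. the final hop.
final_status() {
  # Strip trailing carriage returns, remember the code from every "HTTP/..."
  # status line, and print the last one seen.
  awk '{ sub(/\r$/, "") } /^HTTP\// { code = $2 } END { print code }'
}

# Example usage (replace domain.com with your site):
# curl -sI -L https://domain.com/robots.txt | final_status
```

Keep in mind that a 200 here only tells you your own request got through; requests from Ahrefs's IP addresses can still be handled differently at the edge.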

You may notice in the screenshot above that the error mentions Cloudflare. Our site uses WP Engine’s Advanced Network, which is built in partnership with Cloudflare, so I suspected Cloudflare was blocking the crawler.
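One way to confirm that your responses pass through Cloudflare is to look at the headers: Cloudflare-proxied responses typically carry a `cf-ray` header and often `server: cloudflare`. A minimal bash sketch, assuming you pipe in headers from `curl -sI`; `served_by_cloudflare` is a hypothetical helper name.

```bash
#!/usr/bin/env bash
# served_by_cloudflare (hypothetical helper): read HTTP response headers on
# stdin and succeed (exit 0) if they include a cf-ray header or a
# "server: cloudflare" header. Header names are matched case-insensitively.
served_by_cloudflare() {
  # Drop CR characters so the ^...$ style matching works on raw curl output.
  tr -d '\r' | grep -qiE '^(cf-ray:|server:[[:space:]]*cloudflare)'
}

# Example usage (replace domain.com with your site):
# curl -sI https://domain.com/robots.txt | served_by_cloudflare && echo "proxied through Cloudflare"
```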

Ahrefs has a nifty article on troubleshooting common issues with Site Audit access.

Allow Ahrefs using robots.txt

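We made sure to allow Ahrefs in our robots.txt file by adding the following lines:

User-agent: AhrefsSiteAudit
Allow: /

User-agent: AhrefsBot
Allow: /

That didn’t fix the problem. In hindsight, that makes sense: a robots.txt rule only helps once the crawler can read the file, and Ahrefs was getting a 502 for robots.txt itself.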
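Since WordPress can serve a virtual robots.txt and SEO plugins may rewrite it, it's worth confirming that the file your site actually serves contains the new groups. This bash sketch only checks for a matching User-agent line; it is not a full robots.txt parser, and `has_agent_group` is a name we made up for illustration. The agent name is dropped into a regular expression, which is fine for names like AhrefsSiteAudit that contain no special characters.

```bash
#!/usr/bin/env bash
# has_agent_group AGENT (hypothetical helper): read robots.txt text on stdin
# and succeed (exit 0) if some "User-agent:" line names AGENT, ignoring case.
# This is a presence check only; it does not evaluate Allow/Disallow rules.
has_agent_group() {
  # Drop CR characters so files with Windows line endings still match.
  tr -d '\r' | grep -qiE '^[[:space:]]*user-agent:[[:space:]]*'"$1"'[[:space:]]*(#.*)?$'
}

# Example usage (replace domain.com with your site):
# curl -s https://domain.com/robots.txt | has_agent_group AhrefsSiteAudit && echo "group found"
```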

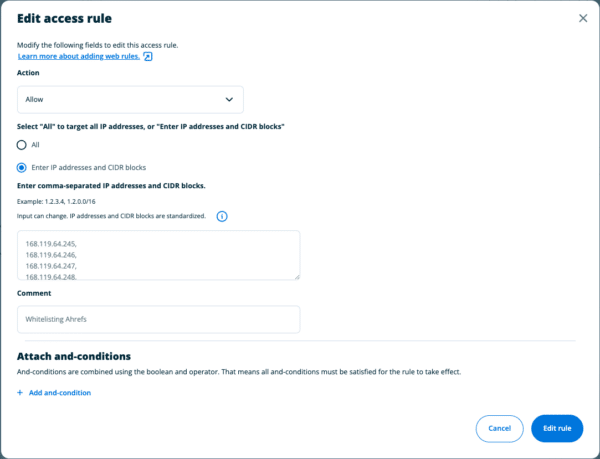

Adding Web Rules at WP Engine to Allow IPs

Then, we collected the Ahrefs IP blocks and addresses we needed to whitelist or allow.

As of this writing, we added the following comma-separated block of IPs to WP Engine’s Web Rules:

54.36.148.0/24,54.36.149.0/24,195.154.122.0/24,195.154.123.0/24,195.154.126.0/24,195.154.127.0/24,51.222.253.0/26,168.119.64.245,168.119.64.246,168.119.64.247,168.119.64.248,168.119.64.249,168.119.64.250,168.119.64.251,168.119.64.252,168.119.64.253,168.119.64.254,168.119.65.107,168.119.65.108,168.119.65.109,168.119.65.110,168.119.65.111,168.119.65.112,168.119.65.113,168.119.65.114,168.119.65.115,168.119.65.116,168.119.65.117,168.119.65.118,168.119.65.119,168.119.65.120,168.119.65.121,168.119.65.122,168.119.65.123,168.119.65.124,168.119.65.125,168.119.65.126,168.119.65.43,168.119.65.44,168.119.65.45,168.119.65.46,168.119.65.47,168.119.65.48,168.119.65.49,168.119.65.50,168.119.65.51,168.119.65.52,168.119.65.53,168.119.65.54,168.119.65.55,168.119.65.56,168.119.65.57,168.119.65.58,168.119.65.59,168.119.65.60,168.119.65.61,168.119.65.62,168.119.68.117,168.119.68.118,168.119.68.119,168.119.68.120,168.119.68.121,168.119.68.122,168.119.68.123,168.119.68.124,168.119.68.125,168.119.68.126,168.119.68.171,168.119.68.172,168.119.68.173,168.119.68.174,168.119.68.175,168.119.68.176,168.119.68.177,168.119.68.178,168.119.68.179,168.119.68.180,168.119.68.181,168.119.68.182,168.119.68.183,168.119.68.184,168.119.68.185,168.119.68.186,168.119.68.187,168.119.68.188,168.119.68.189,168.119.68.190,168.119.68.235,168.119.68.236,168.119.68.237,168.119.68.238,168.119.68.239,168.119.68.240,168.119.68.241,168.119.68.242,168.119.68.243,168.119.68.244,168.119.68.245,168.119.68.246,168.119.68.247,168.119.68.248,168.119.68.249,168.119.68.250,168.119.68.251,168.119.68.252,168.119.68.253,168.119.68.254

These addresses can change over time, so check them against Ahrefs’ current list before using them. Once you’ve accessed the site in WP Engine’s dashboard, go to Web Rules and add a new access rule to allow traffic from the above IPs.
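If you later need to check an address from your access logs against this list (for example, to see whether a blocked request really came from Ahrefs), a short bash sketch can do the CIDR math. It assumes bash and IPv4 only and does no input validation; `ip_in_list` and `ip_to_int` are hypothetical helper names, not WP Engine or Ahrefs tools.

```bash
#!/usr/bin/env bash
# ip_to_int IP (hypothetical helper): convert a dotted-quad IPv4 address
# to a 32-bit integer.
ip_to_int() {
  local IFS=. a b c d
  read -r a b c d <<< "$1"
  echo "$(( (a << 24) | (b << 16) | (c << 8) | d ))"
}

# ip_in_list IP LIST (hypothetical helper): succeed if IP matches any entry in
# the comma-separated LIST, where each entry is a single IPv4 address or an
# IPv4 CIDR block such as 54.36.148.0/24.
ip_in_list() {
  local ip=$1 list=$2 entry net bits mask ipn
  ipn=$(ip_to_int "$ip")
  local IFS=,
  for entry in $list; do
    net=${entry%/*}              # address part (whole entry if there is no "/")
    if [[ $entry == */* ]]; then
      bits=${entry#*/}           # prefix length, e.g. 24
    else
      bits=32                    # a bare address behaves like a /32
    fi
    # Build the netmask, e.g. /24 -> 0xFFFFFF00 (bash arithmetic is 64-bit,
    # so mask back down to 32 bits after shifting).
    mask=$(( bits == 0 ? 0 : (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    if (( (ipn & mask) == ($(ip_to_int "$net") & mask) )); then
      return 0
    fi
  done
  return 1
}
```

For example, with the full list stored in a shell variable (we'd call it `AHREFS_IPS`, another hypothetical name), `ip_in_list 168.119.65.110 "$AHREFS_IPS" && echo covered` prints `covered`, since that address appears in the list as a single IP.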

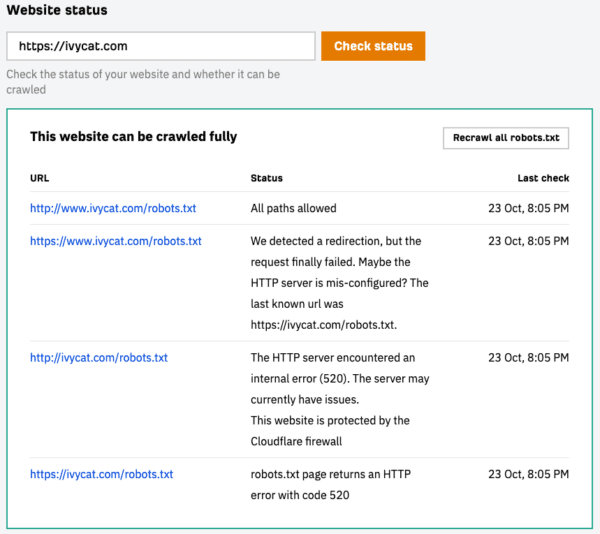

Finally, save the rule, clear your caches, and test. If all is well, you should see something like this:

Take a moment for a happy dance, then return to your search optimization.

If you’re stuck or need help with search engine optimization, please let us know.

Spiffy cat photo by Alejandra Coral on Unsplash